My Perfect Green Data Centre (5) – Cooling Common Sense

There are a few simple things we can do to reduce the huge amounts of energy that data centres consume in keeping cool.

Insulation. I do not understand why the construction industry in Asia is so completely clueless about cavity walls and other ways of keeping cool air in and hot air out, but it is. So, for a start, let’s be clever in our use of construction materials. Structurally, a single-floor data centre is a shed. At least make a shed with insulated ceiling and walls.

Geothermal Piling. This is in widespread residential and industrial use in northern Europe. The ground itself is a great heatsink, and geothermal piles draw a lot of excess heat into it for free.

Air Containment. Computers suck cold air in through the front and blow hot air out of the back. Barely a single data centre in Thailand, for example, makes any attempt to keep the cold and hot air separate. The result is that the cooling system works overtime. This leads to huge inefficiencies, and much higher energy consumption than would otherwise be required.

Temperature: The specification says 18-27C. That means it’s safe to run the intake air at 27C. There is no need whatsoever to turn the air-con down to 21C, as happens in so many data centres. Some people may defend this by saying that, should the cooling go off due to power or other failure, it’s possible to run the equipment for longer before the room becomes so hot that it’s necessary to depower the IT equipment. True, but the amount of time it gains is seconds. In a recent experiment, I killed the air-con: the room heated by 5C in one minute (yes, sixty seconds). So running at 21C rather than 27C buys you about 72 seconds of extra run time for that huge extra cooling cost.
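The 72-second figure is simple arithmetic, and a minimal sketch makes it easy to try other setpoints. It assumes the room keeps heating at the same linear rate I measured (5C per minute), which is a simplification; the function name and parameters are my own invention for illustration.

```python
def extra_runtime_seconds(setpoint_c, limit_c, heating_rate_c_per_min):
    """Seconds gained before intake air climbs from setpoint_c to limit_c.

    Assumes a constant (linear) heating rate once cooling is lost,
    which is a simplification of how a real room behaves.
    """
    headroom_c = limit_c - setpoint_c
    return headroom_c * 60 / heating_rate_c_per_min


# Figures from the text: 21C setpoint, 27C limit, 5C per minute.
print(extra_runtime_seconds(21, 27, 5))  # 72.0
```

Even dropping the setpoint all the way to 18C only gains 108 seconds on the same assumption.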

Layout: Work with your tenants / clients to avoid hotspots. A single cold- or hot-air containment unit needs to be cooled for the hottest area. If one rack’s humming away at 10kW and the rest are ticking over at 2kW, the former will drive the air-flow. Fix it.

So much for colo. In any case, I suspect I’m preaching to the converted as it’s the clients who think they know everything who are the biggest problem. Here’s one for cloud in section…

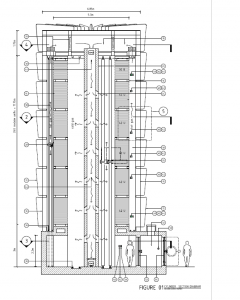

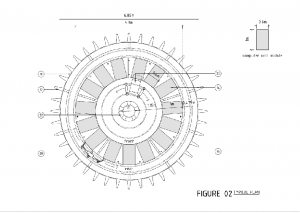

and in plan.

So, what’s going on here?

The idea is a kind of super-containment vessel which uses natural convection to assist the cold- and hot-air separation.

The first thing to note is height. The only reason our racks are 42U is because most humans can’t reach any higher. However, in a cloud environment, where the estate is almost completely homogeneous, and where computers that break don’t need to be fixed – ever – there is no reason for humans to come in (at least, not to fix the computers, although specially trained technicians may need to service other stuff).

Without humans, we can stack computers much higher, and stacking higher allows us to take advantage of natural convection.

Cold air is injected down the middle of the tower. It will need to be blown, but at least cold air falls all by itself, so it will need to be blown less hard than in conventional systems, which blow cold air up from the floor void.

The computers are arranged in a rotunda, the fronts facing the central core and the backs facing the outer side. They take cold air in through the front and blow hot air out through the back.

The hot air rises up the outside of the rotunda. This is an enclosed space, so the chimney effect will accelerate the hot air up, sucking hot air out of the backs of computers on the higher levels. As with the cold air injection, the hot air extraction will still require some fans, but those fans will work a lot less hard than in conventional systems.
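For a rough feel of how much push the chimney effect gives, here is a back-of-envelope sketch using the standard buoyancy relation (pressure difference = g × height × density difference, with ideal-gas air densities). The tower height and air temperatures are purely hypothetical numbers I picked for illustration, and this is nowhere near a substitute for a proper airflow model.

```python
G = 9.81           # gravitational acceleration, m/s^2
R_AIR = 287.05     # specific gas constant of dry air, J/(kg*K)
P_ATM = 101_325.0  # standard atmospheric pressure, Pa


def air_density(temp_c, pressure_pa=P_ATM):
    """Ideal-gas density of dry air in kg/m^3."""
    return pressure_pa / (R_AIR * (temp_c + 273.15))


def stack_pressure_pa(height_m, cold_c, hot_c):
    """Buoyancy pressure difference (Pa) between a cold and a hot air column."""
    return G * height_m * (air_density(cold_c) - air_density(hot_c))


# Hypothetical figures: a 10 m tower, 27C intake air, 40C exhaust air.
print(round(stack_pressure_pa(10, 27, 40), 1))
```

With those assumed figures the result is only a few pascals, which fits the point above: convection helps, but the fans do not go away.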

In addition, with the hot air on the outside of the rotunda, it may be possible to dissipate some heat using heat fins or – next post – evaporative cooling. In practice, these would be on the side of the vessel facing away from the sun, and the side facing the sun would carry solar panels.

How much will this save? I don’t know; I don’t have the technical knowledge to run this through a Computational Fluid Dynamics (CFD) model. The HVAC people I’ve shown this to concur that it’s likely to yield at least some saving, probably a few percent. Even 5% of 500 racks * 10 servers per rack at a PUE of 2 is 125,000W (a figure that implies roughly 250W per server).
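The saving estimate can be written out so the inputs are easy to change. This is only a sketch of the arithmetic: the 250W per server is not stated in the text but is the value the 125,000W figure implies, and the function and parameter names are made up for illustration.

```python
def facility_saving_watts(racks, servers_per_rack, watts_per_server,
                          pue, saving_percent):
    """Watts saved if total facility power drops by saving_percent."""
    it_load_w = racks * servers_per_rack * watts_per_server
    facility_load_w = it_load_w * pue  # PUE = facility power / IT power
    return facility_load_w * saving_percent / 100


# 500 racks, 10 servers per rack, an ASSUMED 250W per server, PUE of 2, 5% saving.
print(facility_saving_watts(500, 10, 250, 2, 5))  # 125000.0
```

Plug in your own server wattage and PUE; the saving scales linearly with each of them.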

And, best of all, it will look much more interesting than the average data centre, which is to all external appearances a shed.