My Perfect Green Data Centre (7) – Photovoltaics

Back to engineering, but first a disclaimer. Whatever numbers I use in this post are wrong. Photovoltaic technology (solar panels) and battery technologies are evolving so rapidly that everything is out of date as soon as it hits the market. So although I believe myself to have got the concepts right, the calculations are intended to show the type of calculation involved, but probably over-estimate the downside and under-estimate the upside. Having said that, the numbers are not order-of-magnitude wrong. They may be 10% or 20% out, but probably not much more.

The advantages of using solar are obvious: sunlight is – at least until the oil companies get their hands on it – free. Photovoltaic (PV) panels generate DC, not AC, and most of our load is DC. That’s a single conversion from photons to DC electricity, rather than from coal (or whatever) to steam to rotational kinetic to AC (via induction in an alternator) to DC, which will be inherently more efficient.

First, the footprint. The carbon footprint of PV panels is poorly understood. All we can say is that the numerator in any calculation consists almost entirely of non-renewables, so will asymptote to infinity. However, PV panels have indefinite life spans, which makes for an equally big denominator. So, overall, the green footprint asymptotes to ∞/∞=1. This is much better than hydrocarbons, for which the numerator is infinite and the denominator – burn it once and it’s gone – is finite.

Second, space. The amount of power a PV panel can generate is the solar radiation falling on it multiplied by the efficiency of the panel. In the tropics, the solar radiation is about 1,350W / m2. I’m not sure how efficient the latest generation is, but let’s say about 30% (a wiki article says 20% is nearer the mark, but the article seems last to have been updated in 2014). To generate 1MW of power, therefore, we need 1MW / (1,350W/m2*30%) = 2,469 m2 of space. A typical data centre budgets 4kW per rack, so 1MW is 250 racks and, at 5m2 / rack including non-white space, that’s 1,250 m2. So although we don’t get quite enough power from putting the panels on the roof, nor do we have to buy vast tracts more land to put the panels on. Cover the car park and we’re done.
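For anyone who wants to plug in their own numbers, the arithmetic above can be sketched in a few lines of Python. The function names are mine, and the inputs (1,350 W/m2, 30% efficiency, 4kW and 5 m2 per rack) are just the rough figures used in this post, not measured values.

```python
def panel_area_m2(load_w: float, irradiance_w_per_m2: float, efficiency: float) -> float:
    """Panel area needed to deliver load_w while the sun is shining."""
    return load_w / (irradiance_w_per_m2 * efficiency)


def floor_area_m2(load_w: float, watts_per_rack: float, area_per_rack_m2: float) -> float:
    """Building footprint implied by the rack power budget."""
    racks = load_w / watts_per_rack
    return racks * area_per_rack_m2


# Figures assumed in this post for a 1MW data centre.
panels = panel_area_m2(1_000_000, 1_350, 0.30)
building = floor_area_m2(1_000_000, 4_000, 5)

print(f"Panel area:    {panels:,.0f} m2")
print(f"Building area: {building:,.0f} m2")
print(f"Extra area needed beyond the roof: {panels - building:,.0f} m2")
```

Drop the efficiency to the wiki article’s 20% and the panel area grows by half, which is still car-park territory rather than vast tracts of land.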

So far, so good. But, as any climate denier is quick to point out, the sun doesn’t shine at night. If we’re to keep our data centre running 24/7 we need batteries.

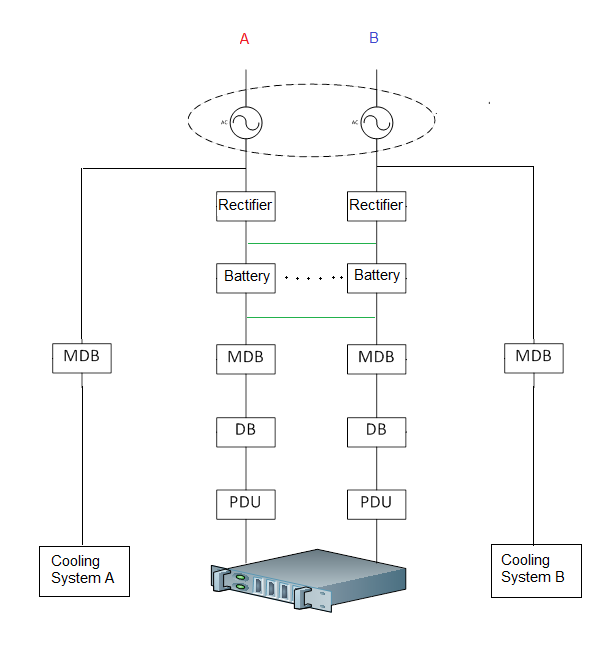

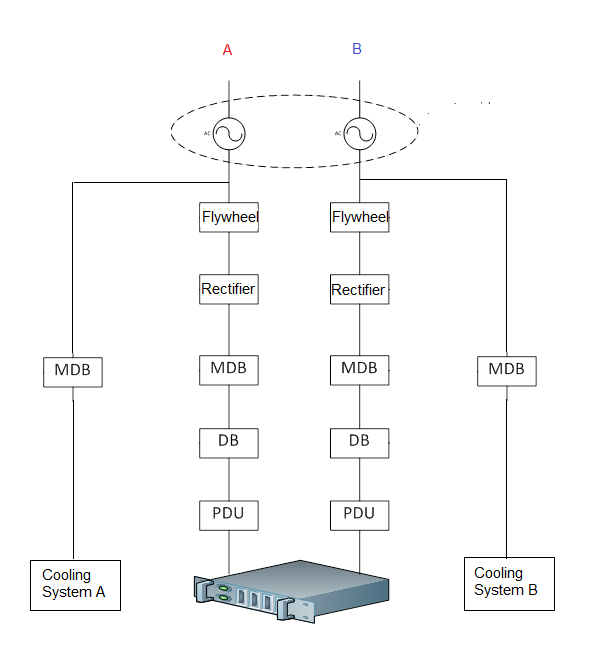

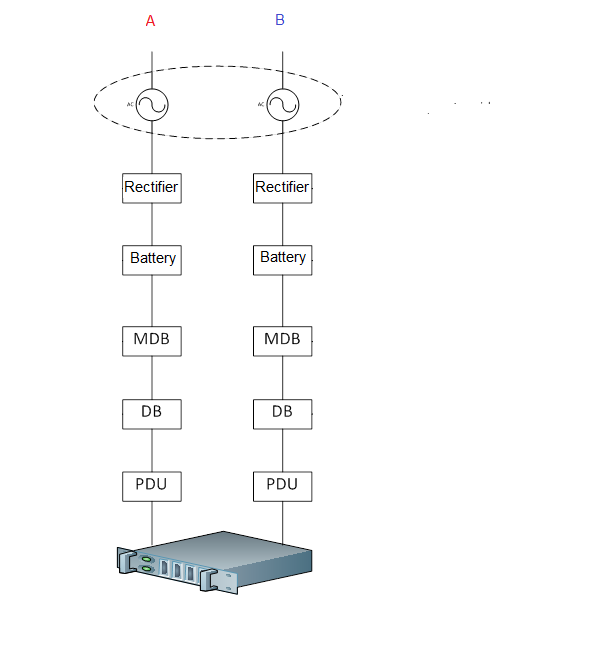

In a conventional data centre, the batteries are expected to provide power in the brief gap between the primary power dropping out and the secondary power kicking in. As a result, batteries provide power for maybe ten minutes per incident, and there’s a gap of hours, if not days or months between incidents. In normal circumstances the time it takes to recharge the batteries doesn’t matter.

This is just as well, because it takes longer to recharge batteries than to discharge them. However, if we’re running off PV panels, the power drops out every night, and we need to have the batteries recharged and ready to go in time for the next night.

There’s a handy paper here which describes the calculations for lead-acid batteries, and the data sheet for a typical industrial scale battery is here (I am not advocating or advertising this battery – it’s just the first one on Alibaba that had a full spec sheet). Here are the calculations:

This is an Excel file you can download and play with. The measure of how long it takes to recharge a battery is its efficiency, and the handy paper says that lead-acid batteries are typically 65% efficient. The spreadsheet calculates the number of batteries needed based on that. The result is 1,667 batteries per MW using the batteries in the data sheet.

These are industrial, not car batteries, and weigh 175 kg apiece. 1,667*175kg is 291 tons of lead-acid battery to keep the data centre running 24/7. Furthermore, these batteries last 10-12 years, so need to be replaced once in the life of the data centre, so that’s 582 tons – imagine 350 crushed cars. And that’s for 1MW. The average data centre is more like 10MW, so that’s 3,500 crushed cars. Quarrying, shipping and destroying 5,800 tons of lead, acid and PVC casing has a pretty significant carbon footprint. And although lithium-ion cells are more efficient, they come at a higher carbon footprint because, where lead is commonplace, lithium is rare.
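The battery tonnage scales linearly with the load, so it is easy to sketch. The battery count and weight below are the figures quoted in this post (1,667 batteries per MW at 175 kg each); the function name and the `replacements` parameter are my own illustration. The totals come out a shade higher than the rounded figures in the text because the code doesn’t round 291.7 tons down to 291 before doubling.

```python
BATTERIES_PER_MW = 1_667   # from the spreadsheet result quoted in this post
BATTERY_MASS_KG = 175      # from the data sheet quoted in this post


def battery_mass_tonnes(load_mw: float, replacements: int = 1) -> float:
    """Total battery mass over the life of the data centre, in tonnes.

    replacements is the number of times the whole battery bank is replaced,
    so the number of full sets bought is 1 + replacements.
    """
    sets = 1 + replacements
    return load_mw * BATTERIES_PER_MW * BATTERY_MASS_KG * sets / 1000


print(f"1MW, one set:           {battery_mass_tonnes(1, replacements=0):,.0f} tonnes")
print(f"1MW, replaced once:     {battery_mass_tonnes(1):,.0f} tonnes")
print(f"10MW, replaced once:    {battery_mass_tonnes(10):,.0f} tonnes")
```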

The other problem is that we need to buy enough PV panels not only to power the data centre during the day, but also to charge the batteries for the night. As the batteries are 65% efficient, that implies that for every Watt of power, we need an additional Watt/.65 for charging, so we need (1+1/.65) * 2,469 m2 = 6,267 m2 of panel per MW. For 10MW, we are buying vast tracts more land than we’d otherwise need.
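As a sketch, here is that multiplier in Python. It follows the formula in this post as it stands, which assumes the batteries must be charged at a rate equal to the full load divided by their efficiency; the function name is mine, and a real design would also account for the relative lengths of day and night.

```python
def panel_area_with_charging_m2(base_area_m2: float, battery_efficiency: float) -> float:
    """Panel area to run the load and charge the batteries at the same time.

    Uses the post's rule of thumb: one unit of area for the daytime load,
    plus 1 / efficiency units to put a night's worth back into the batteries.
    """
    return (1 + 1 / battery_efficiency) * base_area_m2


per_mw = panel_area_with_charging_m2(2_469, 0.65)
print(f"Per MW:   {per_mw:,.0f} m2")
print(f"For 10MW: {10 * per_mw:,.0f} m2")
```

A more efficient battery helps, but not dramatically: even at 100% efficiency the multiplier only falls from about 2.5 to 2.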

And, after all of this, if there are a few days in a row of heavy cloud and little sun, all of the batteries will be exhausted and all this will have been in vain.

What this comes down to is a physical manifestation of the abstract physics I mentioned some time back, that electricity is electrons in motion, and that storing electrons when they’re not in motion but in such a way that they can quickly be, is difficult. As a result, whatever greenness we gain by using PV panels, we lose in terms of the huge footprint in manufacturing all the batteries and extra panels we need to keep the data centre light at night.

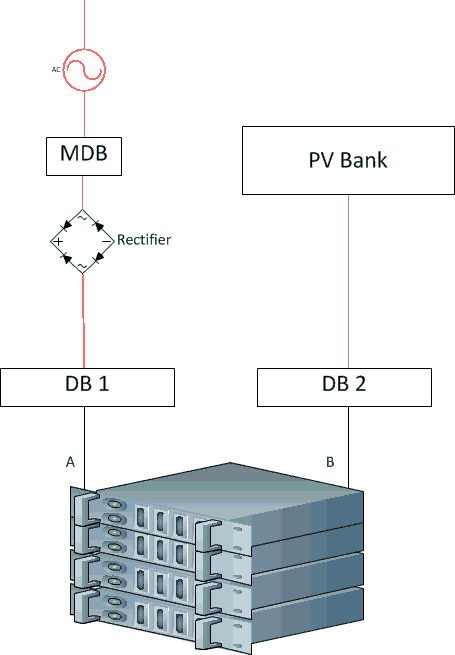

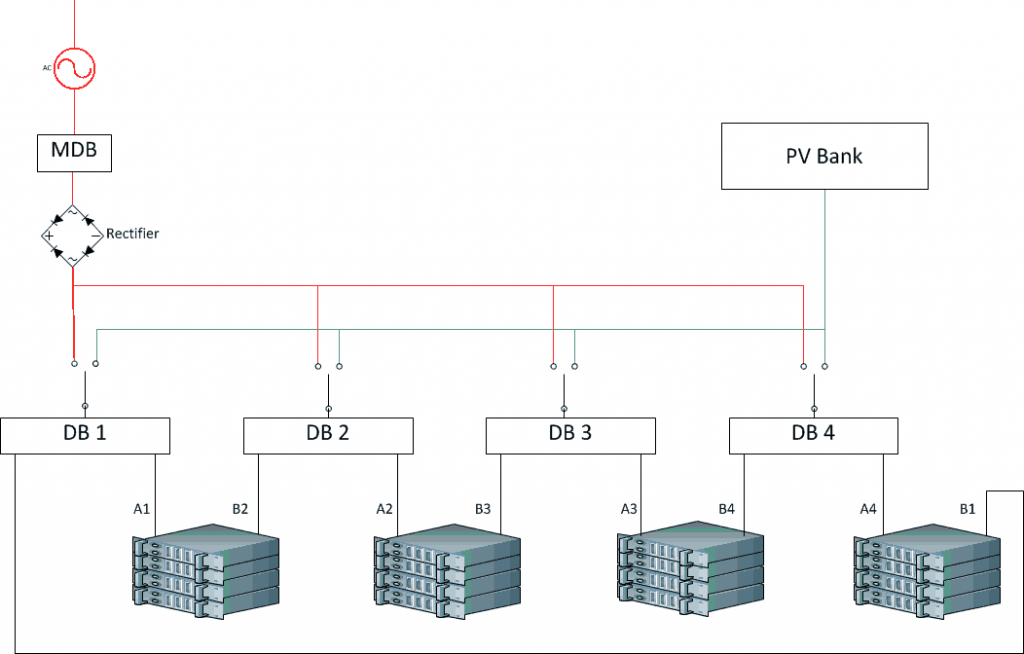

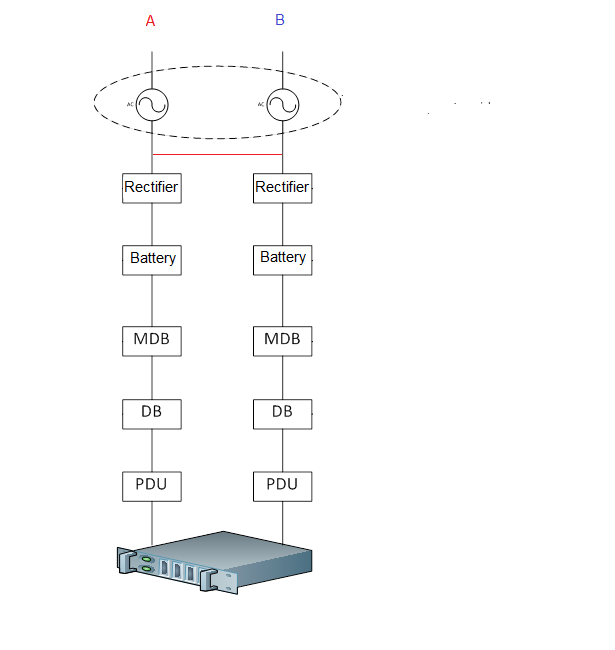

So, it seems to me that compromise is needed. We operate the DC load from solar panels during sunny days, and from other sources at night and on cloudy days. In our DC-only data centre:

(If we stick with traditional AC PSUs, in the above and subsequent diagrams, remove the rectifier and put a DC-AC invertor in the PV line.)

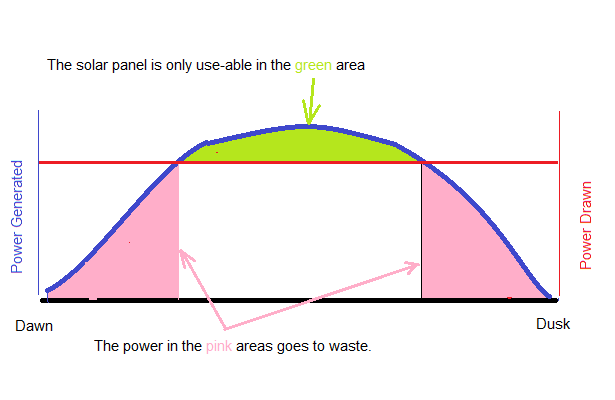

Unfortunately, this is too simple to work. At the start and end of the day, when the power available from solar cells is rapidly increasing or decreasing, even a single computer would have difficulty working out which supply to choose. This is because, unlike gen sets, which go from no power to flat-out almost instantaneously, solar panels energise in the same way that cups of water fill up. The power required by a computer is fixed, so a solar panel is useless to that computer until the panel is sufficiently energised to deliver all of the required power. If, for example, a single computer needs 350W, there’s no point in sticking 250W in the back: the computer won’t work. So we need to wait until the solar panel is fully energised before we can use it.

This means that all the power we could be using in the post-dawn and pre-dusk times goes to waste. In addition, on cloudy or rainy days, the panels may never become fully energised.

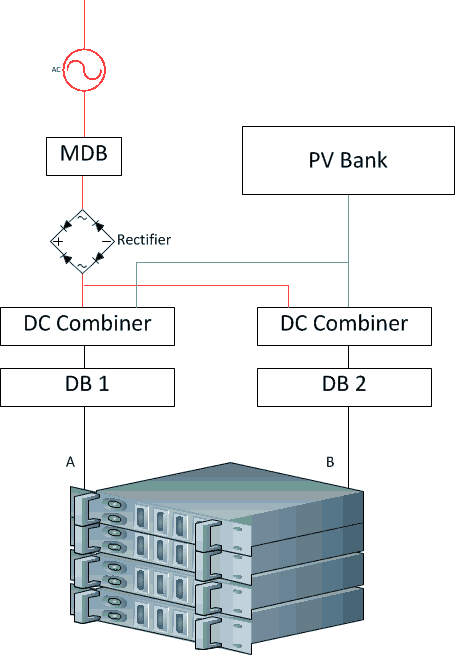

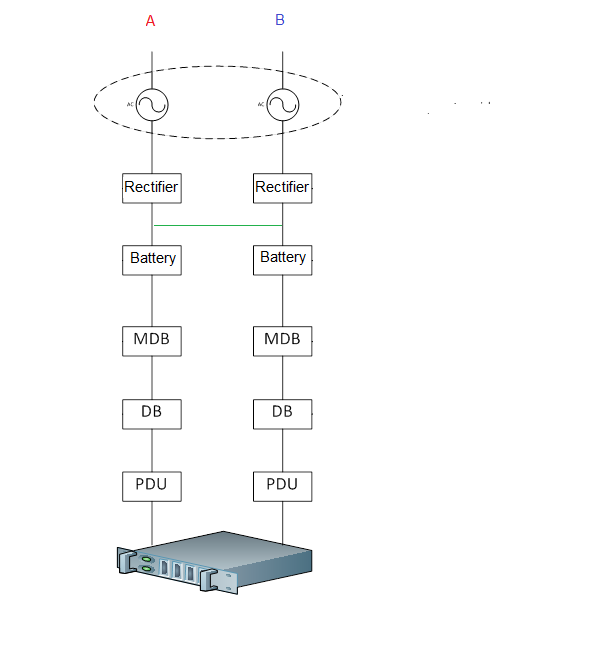

There’s a solution to this, which is called a DC combiner. This takes whatever power is available from the solar panels, and combines it with power from other sources. The resultant topology is something like this:

So each of the power rails combines power from conventional sources with solar power, and feeds that into the computers.

Unfortunately, the technology inside DC combiners has been patented (by Google amongst others – it’s nice to see my thoughts on DC are in good company). In an ideal world, owners of these patents would open source their DC combiners and we could take whatever we could get from the panels and make up the rest from other power sources.

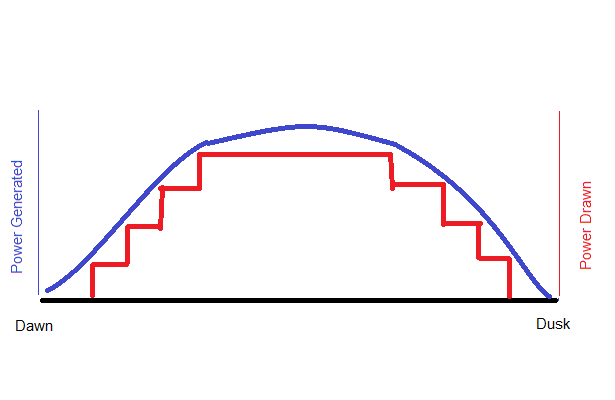

In this non-ideal world, there remains a compromise. Each time the solar panels deliver a threshold amount of power, we use it, and each time they fall below, we switch back. As a graph over the course of a day:

The smooth blue line is the total amount of power the panels generate. In an ideal (Google) world, we would draw the lot but, in our non-ideal world, we divide the DC load into evenly sized lumps – say 300kW / lump – and switch each lump over to solar power as and when the solar farm becomes sufficiently energised to power it. When the farm starts de-energising, we switch the lumps back. On cloudy days, perhaps only 75% of the computers are solar-powered; at night, 0% are.
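The stepped line is just the smooth line rounded down to a whole number of lumps. A minimal sketch, assuming 300kW lumps and (purely for illustration) four of them; the function name is hypothetical and switching margins are ignored here:

```python
def lumps_on_solar(solar_kw: float, lump_kw: float = 300.0, n_lumps: int = 4) -> int:
    """Number of whole lumps of IT load the solar farm can carry right now."""
    return min(n_lumps, int(solar_kw // lump_kw))


# A made-up set of readings through a day, in kW.
for solar_kw in (0, 250, 300, 950, 1_200, 5_000):
    lumps = lumps_on_solar(solar_kw)
    used_kw = lumps * 300
    print(f"{solar_kw:>5} kW available: {lumps} lump(s) on solar, "
          f"{solar_kw - used_kw:>5} kW unused")
```

The "unused" column is the power a DC combiner would let us keep and this scheme throws away.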

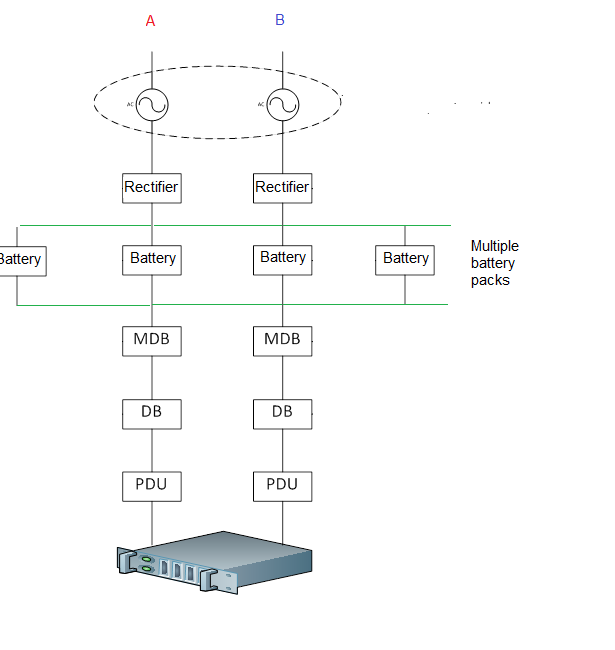

The topology to support this is:

At the bottom, I’ve divided the IT load into four evenly balanced units. As one of the sizes in which off-the-shelf distribution panels come is 300kW, let’s say that each lump of IT load draws 300kW for a total of 1.2MW.

The AC power source at the top left includes gen sets, utility power and whatever: i.e. a reliable source of AC. I haven’t expanded this as I don’t want to clutter the diagram. The AC is converted, probably by multiple redundant rectifiers, into a reliable source of DC. This reliable source is distributed to four DBs, one for each lump of IT load.

At night, all IT loads run off conventional power. As the day starts, we wait until the panels are generating 25%, or 300kW, and switch the first load over. When the panels are up to 50%, we switch the second load over and so on – and in reverse at the end of the day. If it’s cloudy, we may never switch over all four loads and, if it’s really cloudy, we have to run off conventional power all day.

There would have to be a margin of error at the switch-to and switch-from points – for a 300kW IT load, perhaps we don’t switch to solar until we have at least 350kW spare, and we switch from solar if there is less than 325kW spare.
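That margin is what control engineers call hysteresis: the switch-to threshold sits above the switch-from threshold so that a load doesn’t flap back and forth when the panels hover around 300kW. Here is a rough sketch of the decision logic, using the 350kW and 325kW figures above. The function names and the way "spare" is computed (panel output minus what the loads already on solar are drawing) are my assumptions, not a description of any real switchgear.

```python
def next_lumps_on_solar(current: int, solar_kw: float, n_lumps: int = 4,
                        lump_kw: float = 300.0, switch_to_kw: float = 350.0,
                        switch_from_kw: float = 325.0) -> int:
    """Decide how many lumps of IT load should be on solar after a new reading."""
    lumps = current
    # Shed lumps while the most recently added one has too little power behind it.
    while lumps > 0 and solar_kw - lump_kw * (lumps - 1) < switch_from_kw:
        lumps -= 1
    # Add lumps while there is enough unallocated power for another one.
    while lumps < n_lumps and solar_kw - lump_kw * lumps >= switch_to_kw:
        lumps += 1
    return lumps


def run_day(readings_kw: list[float]) -> list[int]:
    """Apply the controller to a sequence of readings, starting from night."""
    lumps, history = 0, []
    for solar_kw in readings_kw:
        lumps = next_lumps_on_solar(lumps, solar_kw)
        history.append(lumps)
    return history


print(run_day([0, 340, 350, 330, 320, 700, 1_300]))
```

Note that the reading of 330kW keeps the first load on solar (it is above the 325kW switch-from point) even though 340kW was not enough to switch it over in the first place.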

I’ve chosen four IT loads to keep the diagram simple. In practice, the size of the DBs would determine the number of IT loads and increment. As a flourish, I’ve also included full redundant paths just to show that this approach can yield them. And, yes, if it’s a conventional data centre with PSUs in the computers, scrub the rectifier, put an invertor (DC-AC convertor) after the solar panels, and everything will still work.

Cooling

Another possibility with solar panels is to forget about the IT, and use the panels to top up the power available to the cooling system, which will work much harder during the day. To do this, we put an invertor after the solar power to turn DC to AC. Combining two AC sources is straightforward as long as they are in-phase, and the invertors can deliver in-phase AC.

Follow-the-Clock Cloud Computing

A more radical solution – especially for cloud providers – is this: don’t run the data centre at all when it’s dark. Power the whole thing down and do the computing in the next time zone to the east in the morning and the west in the evening. This is especially viable for computing used by people (you know, human beings, on their phones and PCs), as most people in any one time zone are asleep for the first few hours of daylight, so the cloud can compute for people to the east, and the converse at the end of the day.

* * *

This is the best I can do on photovoltaic panels. The real problem with photovoltaic solar panels is that the sun doesn’t shine at night, and is diminished on cloudy days, so we either need to design around that, or we store electricity. As soon as we have to store electricity, we fight physics. The next post will look at a way around that.
