My Perfect Green Data Centre (9) – The Perfect Green Data Centre

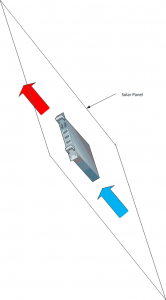

In this series, I’ve tried to unload everything that I see as being wrong about the way we think about, design and build data centres. I’ve said that my perfect green data centre is not a data centre at all, but consists of solar panels, with computers mounted on them, installed on people’s roofs. The second best is the ultimate air-containment monocoque. And the least good is a bodge job for colocation.

But, at a higher level, nothing I’ve said addresses the fundamental problem, which is that computers turn electricity into heat.

To some extent this will always be the case, but it needn’t be so to the extent that it is. Nearly all servers use NMOS chips, and NMOS chews power. There’s nothing to be done about this. The basic unit of all digital technology is the transistor. NMOS requires a transistor and a resistor to create the most basic logic circuit (an inverter), and more complex functions similarly require resistors. Resistors dissipate unwanted electricity by turning it into heat. So NMOS chips necessarily turn electricity into heat.

But the computer chips that power your phone are not NMOS. If they were, your phone battery would last minutes rather than hours, and your phone would burn a hole in your pocket. The chips in your phone are CMOS, and CMOS consumes an order of magnitude less power.

This is because CMOS chips use almost no resistors. For every transistor / resistor pair in NMOS, there are two transistors in CMOS. Those transistors only use power at the instant a state changes, whereas resistors use power nearly half the time. (In an NMOS inverter, whenever the input is 1 and the output is 0, current flows through the resistor. Assuming that on average the inverter spends equal amounts of time in both states, the resistor is pumping out heat half the time. In a CMOS inverter, one transistor is on and the other is off, so there is no direct path for current and neither dissipates heat except while switching; the average amount of power pumped out is close to zero. Similar reasoning applies to more complex functions.)
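To put rough numbers on that parenthetical, here is a minimal Python sketch comparing the average power burned in an NMOS inverter’s pull-up resistor with the switching power of an ideal CMOS inverter. The supply voltage, resistor value, load capacitance and switching frequency are illustrative assumptions, not figures for any real chip, and the model deliberately ignores the NMOS inverter’s own switching power and CMOS leakage current.

```python
# Illustrative values only (assumptions, not measurements of any real chip).
VDD = 5.0          # supply voltage, volts
R_LOAD = 10_000.0  # NMOS pull-up resistor, ohms
C_LOAD = 50e-15    # load capacitance driven by the inverter, farads
FREQ = 10e6        # full switching cycles per second, Hz
DUTY_LOW = 0.5     # fraction of time the output is low (input high)


def nmos_static_power(vdd, r_load, duty_low):
    """Average power burned in the pull-up resistor of an NMOS inverter.

    While the output is low, the pull-down transistor is on and current
    flows from VDD through the resistor to ground: P = VDD**2 / R.
    (Treats the on transistor as an ideal short.)
    """
    return duty_low * vdd ** 2 / r_load


def cmos_dynamic_power(vdd, c_load, freq, activity=1.0):
    """Average switching power of an ideal CMOS inverter.

    There is no static path from VDD to ground, so power is only spent
    charging and discharging the load capacitance: P = a * C * VDD**2 * f.
    """
    return activity * c_load * vdd ** 2 * freq


nmos = nmos_static_power(VDD, R_LOAD, DUTY_LOW)
cmos = cmos_dynamic_power(VDD, C_LOAD, FREQ)
print(f"NMOS static:  {nmos * 1e3:.3f} mW")
print(f"CMOS dynamic: {cmos * 1e6:.3f} uW")
print(f"ratio: {nmos / cmos:.0f}x")
```

With these assumed values the resistor-loaded inverter dissipates about 100 times as much power as the CMOS one; the exact ratio depends entirely on the parameters chosen.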

As transistors take up more space than resistors, and are more complex, CMOS packs fewer logical functions into more space. Because the construction is more complex, the yield – the proportion of correctly functioning chips coming off the production line – is lower, so CMOS chips are more expensive. But the power consumption is much, much lower.
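The link between yield and price is the usual cost-per-good-die arithmetic: the cost of processing a wafer is spread only over the dies that actually work. This is a simplified sketch; the wafer cost, die count and yields are hypothetical numbers chosen for illustration, and the model ignores testing, packaging and die-size effects.

```python
def cost_per_good_die(wafer_cost, dies_per_wafer, yield_fraction):
    """Wafer cost divided over the dies that actually work.

    Simplified model: ignores test, packaging and die-size effects.
    """
    if dies_per_wafer <= 0:
        raise ValueError("dies_per_wafer must be positive")
    if not 0 < yield_fraction <= 1:
        raise ValueError("yield_fraction must be in (0, 1]")
    return wafer_cost / (dies_per_wafer * yield_fraction)


# Hypothetical wafer: same cost and die count, two different yields.
print(cost_per_good_die(5000.0, 500, 0.8))
print(cost_per_good_die(5000.0, 500, 0.5))
```

Dropping the assumed yield from 80% to 50% raises the cost of each working chip from 12.5 to 20 currency units, even though the wafer itself costs the same.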

Conversely, NMOS chips pack more computing into less space, and cost less than CMOS chips of the same die size. Hence their ubiquity, especially in server-grade equipment. The downside is that resistors turn electricity into heat and transistors largely don’t, so NMOS chips dissipate a great deal more power as heat.

But the limiting factor in solar power is the insolation: the amount of solar energy that reaches the ground. For any given part of the world on any given day, this is fixed. Even if we recover 100% of that solar energy – and we never will – the roughly 1,350W per square meter that the sun delivers (measured above the atmosphere; nearer 1,000W reaches the ground in the tropics at noon on a clear day) will power at most two or three off-the-shelf commodity NMOS servers. Those two or three servers will occupy much less than a square meter.

But that same 1,350W will power maybe a dozen CMOS servers. And the resultant extra density more than compensates for the fact that CMOS chips tend to be less powerful than NMOS chips.
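The arithmetic behind these two paragraphs can be sketched as follows. The 1,350W figure is the article’s; the per-server power draws (500W and 110W) are hypothetical values picked to match the “two or three” and “a dozen” estimates, and the conversion efficiency is a parameter because real panels recover only a fraction of the incoming energy.

```python
import math

# The article's figure for solar power per square meter, in watts.
INSOLATION_W_PER_M2 = 1350.0


def servers_per_square_meter(server_watts, insolation=INSOLATION_W_PER_M2,
                             efficiency=1.0):
    """Whole servers one square meter of panel can run.

    efficiency is the fraction of incoming solar power the panel converts
    to electricity (1.0 is the impossible 100% case).
    """
    if server_watts <= 0:
        raise ValueError("server_watts must be positive")
    return math.floor(insolation * efficiency / server_watts)


# Hypothetical per-server draws: ~500 W for a commodity NMOS server,
# ~110 W for a CMOS one.
for watts in (500, 110):
    ideal = servers_per_square_meter(watts)
    realistic = servers_per_square_meter(watts, efficiency=0.2)
    print(f"{watts} W server: {ideal} at 100% efficiency, {realistic} at 20%")
```

At an assumed panel efficiency of 20%, the same square meter runs two of the hypothetical 110W servers and none of the 500W ones, which is the imbalance the next paragraph describes.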

In other words, there’s an imbalance. NMOS consumes solar energy faster than we can generate it; CMOS uses solar energy at roughly the same rate per square meter that we can generate it. And because we’re using that energy to power state changes – which is what matters – rather than to preserve states – which shouldn’t take any energy at all – we pack far more computing into each square meter than we can with NMOS.

So the perfect green data centre consists of PV panels on people’s roofs, with CMOS servers attached, connected by wifi towers or fibre as appropriate, and with data replicated at time zones +8 and -8 hours away (or +120 and -120 degrees of longitude) so that computing follows the clock.
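“Computing follows the clock” can be sketched as a scheduler that sends work to whichever replica is nearest local solar noon. This is a minimal illustration, not a design from the article: the site names are hypothetical, and it treats each site’s UTC offset as its solar time, which real time zones only approximate.

```python
# Hypothetical replica sites and their offsets from UTC, in hours.
# Eight hours is 120 degrees of longitude (the sun moves 15 degrees per hour).
SITES = {"home": 0, "east": +8, "west": -8}


def local_solar_hour(utc_hour, offset_hours):
    """Local solar time (0 to 24) at a site offset_hours from UTC."""
    return (utc_hour + offset_hours) % 24


def sunniest_site(utc_hour, sites=SITES):
    """Name of the site whose local time is closest to solar noon."""
    def distance_from_noon(name):
        return abs(local_solar_hour(utc_hour, sites[name]) - 12)
    return min(sites, key=distance_from_noon)


for utc in (4, 12, 20):
    site = sunniest_site(utc)
    local = local_solar_hour(utc, SITES[site])
    print(f"{utc:02d}:00 UTC -> {site} (local {local:02d}:00)")
```

With three sites eight hours apart, some site is always within four hours of noon, so in this idealized model the work never runs more than four hours either side of midday.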

Or, in other words, the perfect green data centre consists of our planet Earth itself, shorn of the ugly concrete boxes that turn electricity into heat. Who’s up for building it? I’d be delighted to hear from you – chris,maden [at] cpmc.com.hk.