Having concluded in the previous post that the best data centre is no data centre, in this post and the next I'll look at the second-best options for cloud and colocation data centres.

The reason I distinguish between cloud and colocation is that the IT in a cloud data centre is much more homogeneous than that in a colo data centre. Where colocation tenants tend to pack their racks with all manner of stuff, cloud operators and internet service providers tend to buy thousands of identical servers with identical disks and identical network equipment. This opens up possibilities.

The first is to keep humans out. Humans bring in dust, heat, moisture and clumsiness. We present a security risk. And we add a lot less to computing than we like to think.

If we are to keep humans out, what happens when things break? A simple answer, and one that I suspect will be the most cost-effective over the lifespan of a cloud data centre, is "nothing." Given the low cost of computing power, fixing a single broken server, disk or motherboard is hardly going to make a difference. That computer manufacturers no longer even quote the Mean Time Between Failures, a measure of the reliability of mechanical things, suggests that out of, say, 5,000 servers, 4,999 will be working just fine after five years.
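To put rough numbers on the "do nothing" option, here is a minimal sketch of the arithmetic. It assumes every server fails independently at the same constant annual rate, which real hardware does not strictly do, and the rates in the loop are illustrative placeholders rather than measured figures. The name `expected_survivors` is invented for this example.

```python
def expected_survivors(servers: int, annual_failure_rate: float, years: int) -> float:
    """Expected number of servers still working after `years`.

    Assumption (not from any vendor data): each server fails independently
    with the same probability `annual_failure_rate` in every year, and
    nothing is ever repaired or replaced.
    """
    if not 0.0 <= annual_failure_rate <= 1.0:
        raise ValueError("annual_failure_rate must be between 0 and 1")
    if servers < 0 or years < 0:
        raise ValueError("servers and years must be non-negative")
    # Probability that one server survives every year, times the estate size.
    return servers * (1.0 - annual_failure_rate) ** years


# Placeholder failure rates, chosen only to show how sensitive the result is.
for rate in (0.001, 0.01, 0.05):
    alive = expected_survivors(5000, rate, 5)
    print(f"annual failure rate {rate:.1%}: about {alive:.0f} of 5000 still working")
```

Whether "nothing" really is the cheapest answer then comes down to comparing the value of the lost servers against the cost of whatever would have been needed to fix them.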

If, however, we are going to insist on fixing things, how about robots?

I was told five years ago, when I first thought of robotics in data centres, that robots in data centres would never work. For a colocation data centre, I came to agree. The best we could do would be to build a pair of robotic hands that are remotely operated by a human. This would keep the dust, heat and moisture out, but instead of having a weak, clumsy human bouncing off the racks, we'd have a strong, clumsy robot. On top of that, for remote robotic hands to work, they need to be tactile, and to this day, tactile robotics costs serious money.

But my friend Mark Hailes had a suggestion: for a cloud data centre, mount all the equipment in a standard-sized tray with two power inputs and two fibre ports. Rather than have a fully-fledged robot, use existing warehouse technology to insert these trays into the racks and remove them again. There could be standard-sized boxes, 1U, 2U and 4U, to accommodate different servers.

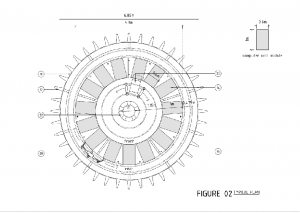

My friend Richard Couzens then inspired a further refinement, which is to have a rotunda rather than aisles and rows. This minimises the distances the robots have to move, and therefore reduces the number of moving parts, which ought to improve the reliability of the whole.

Finally, with robots doing the work, there is no particular need to stop at 42U: the computers can be stacked high.

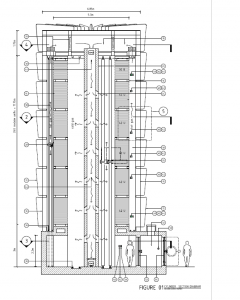

Put these together, and we get a very different layout from that of a conventional data centre:

If that looks familiar, it’s because it’s the same layout I suggested here to take advantage of natural convection currents. So here’s the whole story.

Before operations, the tower will be populated with racks which are designed to receive trays. The racks will be fully wired with power and data. Humans, who in a group can work more quickly than a single robot, will fill the trays with computers and the racks with trays. When everything's been plugged in, the humans leave. The entire tower is then sealed, the dirty air pumped out, and clean air pumped in. The tower will use evaporative cooling; that will be switched on and the computers powered up.

We only supply DC power. There is no AC in the tower, and consequently no high voltages. The robot (which is more of a dumb waiter than a cyborg) can be powered using compressed air or DC motors – that’s something for a robotics guru.

The tower is designed to be run for many years without human incursion. If something in a tray breaks, the robot will retrieve the tray and deliver it to the airlock, where a person picks it up and takes it away for repair or disposal. People outside the data centre will pack computer equipment into trays and insert the trays into an airlock at the bottom of the tower, from where the robot will pick each tray up, transport it to its final location, and insert it.
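The life of a tray described above can be written down as a small state machine. This is only a sketch of the idea: the state names, the `NEXT_STATE` table and the `advance` function are all invented for illustration, and a real system would need more states (a tray that is swapped while healthy, for instance).

```python
from enum import Enum


class TrayState(Enum):
    OUTSIDE = "outside"                    # being packed or repaired by people
    AIRLOCK_INBOUND = "airlock_inbound"    # placed in the airlock by a person
    CARRIED_IN = "carried_in"              # on the robot, heading for its slot
    INSTALLED = "installed"                # plugged into a rack and running
    FAULTY = "faulty"                      # something in the tray has broken
    CARRIED_OUT = "carried_out"            # on the robot, heading for the airlock
    AIRLOCK_OUTBOUND = "airlock_outbound"  # waiting for a person to collect it


# Hypothetical, deliberately linear lifecycle: each state has one successor.
NEXT_STATE = {
    TrayState.OUTSIDE: TrayState.AIRLOCK_INBOUND,
    TrayState.AIRLOCK_INBOUND: TrayState.CARRIED_IN,
    TrayState.CARRIED_IN: TrayState.INSTALLED,
    TrayState.INSTALLED: TrayState.FAULTY,
    TrayState.FAULTY: TrayState.CARRIED_OUT,
    TrayState.CARRIED_OUT: TrayState.AIRLOCK_OUTBOUND,
    TrayState.AIRLOCK_OUTBOUND: TrayState.OUTSIDE,
}


def advance(current: TrayState, requested: TrayState) -> TrayState:
    """Move a tray to `requested`, refusing any step the lifecycle does not allow."""
    if NEXT_STATE[current] is not requested:
        raise ValueError(f"cannot go from {current.value} to {requested.value}")
    return requested
```

The point of the table is that the only places a tray ever meets a human are the `OUTSIDE` state and the two airlock states; everything in between is the robot's job.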

When it’s time to replace the entire estate, the tower is depowered and humans can empty it out and build a new estate.

The robot uses the central core of the tower. All it has to do is move up, down, around in circles and backwards and forwards. These are simple operations but nonetheless, mechanical stuff can break and does require preventative maintenance. When not in use, the robot therefore docks in an area adjacent to the airlock, so it can be inspected without humans entering the tower. As a last resort, if the robot breaks when in motion, or if something at the back of the racks breaks, a human can get in, but must wear a suit and breathing apparatus. That may sound extreme, but the tower will be hot and windy.
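To show how little the robot has to know, here is a rough sketch that turns a journey between two slots into those three kinds of movement. Everything in it is an assumption made for illustration: slots are addressed by a level (counted from the bottom) and a bay number around the ring, the ring has a fixed number of bays, and the names `Slot` and `plan_moves` are made up.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Slot:
    level: int  # height in the tower, counted upwards from 0
    bay: int    # position around the ring, 0 .. bays - 1


def plan_moves(start: Slot, target: Slot, bays: int) -> list[tuple[str, int]]:
    """Break a trip from `start` to `target` into rotate, lift, extend, retract.

    Rotation takes the shorter way around the ring; a negative count means
    turning in the opposite direction. Levels and bays are abstract units.
    """
    if bays <= 0:
        raise ValueError("bays must be positive")
    for slot in (start, target):
        if slot.level < 0 or not 0 <= slot.bay < bays:
            raise ValueError(f"slot out of range: {slot}")

    moves: list[tuple[str, int]] = []

    # Around in circles: pick the shorter direction.
    steps = (target.bay - start.bay) % bays
    if steps > bays / 2:
        steps -= bays
    if steps:
        moves.append(("rotate", steps))

    # Up and down.
    lift = target.level - start.level
    if lift:
        moves.append(("lift", lift))

    # Forwards to reach the tray, then backwards into the core.
    moves.append(("extend", 1))
    moves.append(("retract", 1))
    return moves


print(plan_moves(Slot(level=0, bay=0), Slot(level=10, bay=7), bays=8))
```

With a ring of racks the rotation is never more than half a turn, which is the practical benefit of the rotunda over long aisles.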

It may also be filled with non-breathable gas. I’m told that helium has much better thermal qualities than natural air, and filling the tower with helium would not only keep humans out, but would also obviate the need for fire protection. The lights (LED lighting, of course) would be off unless there’s a need for them to be on, and a couple of moveable cameras can provide eyes for security and when things go wrong.

Each tower would be self-contained. Multiple towers could be built to increase capacity. Exactly how close they could be, I don’t know. But this is a design that achieves a very high density of computer power in a very small area: that makes it suitable for places where land is expensive.

Last but not least, in this most aesthetically-challenged of industries, these towers would look really cool. So: Google, Facebook, Microsoft: run with it!
